- Diagnostics utility

- Changing the Private Cloud address

- Enabling HTTPS after installation

- Moving the Private Cloud instance to a different host machine

- Timeout: Socket is not established

- Runtime error: Out of memory

- The “Accept timed out” exception

- Models do not launch as if the instance is not activated

- Some components do not start after restarting the rest component

- The frontend component fails to start due to authentication failure

- The controller component cannot start other services

- Problem with the Docker image pulling

- The controller component log reports the exception after an update

- Enabling the Elastic Load Balancer HTTPS proxy support in the Cloud instance

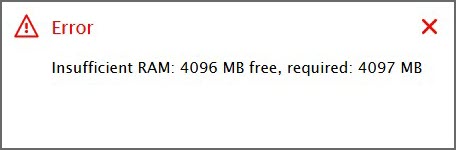

- The “Insufficient RAM” error

- An update of the SSL certificate is required

- Access to POI files is denied

- LDAP

- Problems with desktop AnyLogic

Applies to AnyLogic Cloud 2.8.0. Last modified on May 21, 2026.

This section covers various issues that you might encounter when managing Private Cloud and describes how to deal with them.

Issues can occur:

- During or after the Private Cloud installation

- When restarting service components

If you encounter a problem, you can review Docker logs, individual component logs, and installer logs to determine the cause of the problem.

To obtain the list of running Docker containers, execute the following command:

sudo docker ps

To collect Docker logs from an individual container running a specific Private Cloud component, execute the following command:

docker logs %component name%

If the command output is too long, limit it to the last 100 lines:

docker logs %component name% --tail 100

By default, the installation script log is located in /tmp/alc_installer.log.
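If you need the logs of all Private Cloud containers at once (for example, to send them to support), you can loop over the container names that docker ps reports. The following is a minimal sketch, not a tool shipped with Private Cloud: the function name collect_container_logs and the default output directory /tmp/alc_logs are hypothetical, and the DOCKER variable is an assumption that lets you substitute sudo docker.

```bash
#!/usr/bin/env bash
# Hypothetical helper (not part of Private Cloud): saves the last N lines of
# each running container's log to <output dir>/<container name>.log.
# Set DOCKER="sudo docker" if your user is not in the docker group.
collect_container_logs() {
  local out_dir="${1:-/tmp/alc_logs}"  # hypothetical default location
  local lines="${2:-100}"
  local docker_cmd="${DOCKER:-docker}"
  local name
  mkdir -p "$out_dir" || return 1
  # --format '{{.Names}}' prints one container name per line.
  for name in $($docker_cmd ps --format '{{.Names}}'); do
    # docker logs replays the container's stderr on stderr, so capture both streams.
    $docker_cmd logs "$name" --tail "$lines" > "$out_dir/$name.log" 2>&1
  done
}
```

For example, after loading the function with source, run collect_container_logs /tmp/alc_logs 100 and then pack the directory with tar -czf alc_logs.tar.gz -C /tmp alc_logs.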

If you are experiencing a problem with the Private Cloud instance and none of the options below help, contact our support team at support@anylogic.com and provide them with the list of running containers and their logs to find a solution.

If you are experiencing a problem with the installation script, provide the list of running containers, as well as the logs of the controller component and installation script.

To obtain information about the instance and the system, consider using the diagnostics utility that has been included with Private Cloud since version 2.4.1.

Diagnostics utility

The diagnostics utility is a bash script located in the tools subdirectory of your Private Cloud installation. This directory is created automatically during the initial installation or after an update.

After you run the diagnose.sh script without any parameters, it creates an archive in the /tmp/ directory. The resulting archive is named diagnose_alc_MMDDYYYYHHMM, where MMDDYYYYHHMM is the creation date and time, and contains the following:

- general_info.txt: contains the installed Private Cloud version number and basic information about the instance:

  - Cloud version: the raw contents of the /etc/altab file.

  - Docker version.

  - Docker proxy information: the environment variables applied to Docker, as shown by the systemctl show --property=Environment docker command. If the environment is empty (Environment=), either no proxy is configured or it has not been applied yet, even if a proxy configuration file exists.

  - Hardware information: collected with the lscpu and lsmem commands.

  - Disk space: output of the df -h command.

  - Inode usage: output of the df -i command.

  - List of running containers: output of the docker ps command.

  - List of running processes: output of the top command.

- The configs directory: contains copies of the Private Cloud configuration files, as well as:

  - The Team License Server configuration (taken from <AnyLogic Cloud installation directory>/alc/controller/conf/license-server).

  - The public SSL certificate of the instance (taken from <AnyLogic Cloud installation directory>/alc/controller/preload/frontend/).

- The infra directory: contains information about the environment infrastructure in the infra-info.txt file, namely the output of the following commands:

  - Basic OS information: output of cat /etc/os-release or cat /etc/centos-release, if applicable.

  - SSH information: output of the ssh -V and systemctl status sshd commands, as well as the sshd configuration (taken from /etc/ssh/sshd_config).

  - Listening ports: output of the ss -tlpn command.

  - License server information: output of the systemctl status anylogic-tls.service command.

  - SELinux information: output of the sestatus command.

  - iptables configuration: output of the iptables -L -v -n command.

  - Firewall status: output of the systemctl is-active firewalld.service command.

  - Installed packages: output of either the apt list --installed or the yum list installed command.

  - Host limits: output of curl for <controller host machine>:9000/limits.

  - User group membership: whether the user belongs to the docker group.

  - Global file limits information: output of the prlimit command and the file-related entries of /proc/<executor PID>/limits (filtered with grep files).

- The logs directory: contains the logs of each Private Cloud container, as well as kernel messages (dmesg). For each container, the logs are collected with the docker logs command. For example, for the controller container:

docker logs controller --tail 1000

The general Docker log is collected with the journalctl -u docker.service --since=today --no-pager command.

Kernel messages are collected using the dmesg -HP --since=today command.

The logs directory also contains a copy of the /tmp/alc_installer.log file, if it exists, as it may help identify issues that occurred during the installation process.

Changing the Private Cloud address

The Private Cloud instance address serves many purposes: it is used to open the product’s web UI, run models, verify the activation key, and so on.

If the instance has moved to another host and you need to change its address, run the update script with the --cloud_address flag:

./install.sh update --cloud_address <new address>

Enabling HTTPS after installation

If HTTPS was not enabled during the installation of Private Cloud, you can enable it later by running the following command from the folder that contains the Private Cloud installation script:

sudo ./install.sh update --use_https y --https_key <path to the key> --https_cert <path to the certificate>

This command configures Private Cloud to use HTTPS with the specified private key and certificate.

For more information about the available installation script options, see Installer reference.

Moving the Private Cloud instance to a different host machine

If you already have a Private Cloud instance installed and need to move it to a different host machine, follow these steps:

1. Make a backup of the existing instance.

2. In the Administrator panel, on the Status tab, drop your license key back to Team License Server.

3. Install a new instance of Private Cloud on the new host, using the same version of the installation script that you used to install the previous instance.

If you have lost access to the required version of the installation script, you can request it from our support team at support@anylogic.com.

4. On the new instance, run the restore script and apply the backup you created in step 1.

5. Uninstall the outdated Private Cloud instance.

Timeout: Socket is not established

The controller service component log may report this issue for two main reasons:

- One of the ports required to run Private Cloud is closed.

- iptables chains apply the DROP target to SSH and Docker connections.

To identify the problem:

- Open your Linux terminal.

- Check that all the required ports are open and available:

22, 80, 5000, 5432, 5672, 9000, 9042, 9050, 9080, 9101, 9102, 9103, 9200, 9201, 9202

To do this, run one of the following commands:

sudo lsof -i -P -n | grep LISTEN

sudo netstat -tulpn | grep LISTEN

sudo nmap -sTU -O <Private Cloud IP address>

For example, the lsof command returns a table consisting of lines that look something like this:

sshd 9406 root 4u IPv6 10473818 0t0 TCP *:22 (LISTEN)

In this line, sshd is the application name, 22 is the port, and 9406 is the process ID. LISTEN means that the port is open and accepting new connections.

- Check the iptables configuration by running the following command:

iptables -L -v -n

If an entry that contains the Private Cloud IP address has DROP as its target, SSH connections are being dropped, and controller cannot deploy Private Cloud properly.
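Both checks above can be scripted so that you do not have to scan the output by eye. The sketch below is an illustration, not part of Private Cloud: the function names missing_ports and drop_rules_for_ip are hypothetical, it assumes the column layout of ss -tln (local address in column 4) and of iptables -L -v -n (target in column 3, source and destination in columns 8 and 9), and it only checks TCP listeners; use nmap -sTU if you also need UDP.

```bash
#!/usr/bin/env bash
# Ports listed above as required by Private Cloud (TCP check only).
REQUIRED_PORTS="22 80 5000 5432 5672 9000 9042 9050 9080 9101 9102 9103 9200 9201 9202"

# Reads `ss -tln` output on stdin and prints each required port that has no
# listening TCP socket (one port per line).
missing_ports() {
  local listening port
  # Column 4 is "Local Address:Port"; keep only the part after the last colon.
  listening=$(awk 'NR > 1 { n = split($4, parts, ":"); print parts[n] }' | sort -u)
  for port in $REQUIRED_PORTS; do
    printf '%s\n' "$listening" | grep -qx "$port" || echo "$port"
  done
}

# Reads `iptables -L -v -n` output on stdin and prints rules whose target is
# DROP and whose source or destination is exactly the given IP address.
# Returns 0 if at least one such rule exists, 1 otherwise.
drop_rules_for_ip() {
  awk -v ip="$1" '
    $3 == "DROP" && ($8 == ip || $9 == ip) { print; found = 1 }
    END { exit !found }'
}
```

For example: sudo ss -tln | missing_ports prints the ports that nothing listens on, and sudo iptables -L -v -n | drop_rules_for_ip <Private Cloud IP address> prints the matching DROP rules, if any.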

Runtime error: Out of memory

Possible reasons for this error include an insufficient number of CPU cores on the host machine and a lack of available memory.

To avoid this problem, consider increasing the number of CPU cores and the amount of memory in line with the Private Cloud system requirements.

The “Accept timed out” exception

This error may occur when a model starts. It typically indicates a problem with custom Java virtual machine (JVM) arguments defined in the configuration file of the executor component. Check that the specified arguments are valid, or try removing them. If the issue persists, contact our support team for assistance.

Models do not launch as if the instance is not activated

If your Private Cloud is not activated, you can still access the web interface of your instance. However, model execution is not available for a non-activated instance: if you try to run a model, the following message appears: Activate AnyLogic Private Cloud to run the model.

If you are sure you have a valid license but still see this message when starting a model, do the following:

- Check the connection to your Team License Server. If Team License Server fails to start after the host machine reboots and the message address is already in use appears in the logs, make sure the server runs as a user that is not root.

- Locate the alc/controller/conf/license-server file in the directory where your Private Cloud is installed (/home/alcadm by default).

Within the file, make sure the Team License Server address is present in the following format: %the IP address of the server%:8443.

Do not change the content of the file manually: to update it, use the Status tab of the administrator panel to drop the license and lease it anew.

- After completing the above checks, execute docker restart rest in the terminal on the host machine. Wait a few minutes.

If these steps do not help, make sure that Team License Server is reachable from the controller container. To do this, use the netcat (nc) utility or telnet. For example, open a shell in the controller container and connect to the server:

docker exec -it controller /bin/sh

nc %the IP address of the server% 8443

Then type a test message, shown as %message% below:

%message%

If the command returns P, Team License Server is available.

If none of this helps, get the controller component log by running the following command on the machine that runs this component:

docker logs controller --tail 100

Then contact our support team at support@anylogic.com and attach the log to your message.

Some components do not start after restarting the rest component

This issue can occur on Red Hat machines running Private Cloud. It results in the following behavior:

- Any attempt to run a model results in a 503 error visible in the web browser console

- Running the docker ps command results in an incomplete list of service components

- Some services fail to start because a required port is in use

- Ports cannot be freed even after a problematic service is stopped

Other errors can be found in the Docker logs, which are available by executing the following command:

journalctl -u docker

To resolve this issue:

- Disable IPv6:

sysctl -w net.ipv6.conf.all.disable_ipv6=1

sysctl -w net.ipv6.conf.default.disable_ipv6=1

- Stop all Docker containers:

docker stop $(docker ps -q)

Note that this affects all Docker containers, including those used for purposes other than Private Cloud. Use with caution.

- Stop the Docker daemon:

systemctl stop docker

- Start the Docker daemon again:

systemctl start docker

- Start the Docker container that runs the controller service component:

docker start controller

Other Private Cloud containers will start automatically.

The frontend component fails to start due to authentication failure

You can identify this issue in the controller container logs, which you can access by executing the docker logs controller command. If SSH authentication failure is the cause of the problem, you can start the frontend component manually:

docker start frontend

Another possible reason for this issue is a restriction that prohibits executing the scp command on the machine serving Private Cloud. You can also check for this in the controller container logs.

If this is the case, manually copy the needed files to the cache folder:

sudo cp -r /home/alcadm/alc/controller/preload/frontend /home/alcadm/alc/cache

To make sure frontend does not try to overwrite the copied files, rename the preload/frontend directory:

sudo mv /home/alcadm/alc/controller/preload/frontend /home/alcadm/alc/controller/preload/frontend.old

If frontend still does not start, do the following:

- In the controller component logs, find the command that was used to start the frontend component:

docker logs controller | grep "docker run " | grep frontend

- After locating the command, execute it. It should look similar to the following:

docker run -d --name frontend --restart unless-stopped -v /home/alcadm/alc/cache/frontend:/etc/nginx/conf.d -p 80:80/tcp -e ALC_CONTROLLER_HOST=192.168.0.100 -e ALC_CONTROLLER_PORT=9000 -e ALC_NODE_ID=WaowUc4p6c-5WFZAo2tZxyFGsht12EM4w2D_QTxiP-o local.cloud.registry:5000/com.anylogic.cloud/frontend:2.3.0

After you execute the command, the controller component will start the frontend component automatically.
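If the grep above returns several lines, or lines with a log prefix (timestamp, level, and so on) in front of the command, you can extract just the most recent frontend command. This is a sketch under an assumption: that each such log line contains the complete docker run command on a single line. The function name last_frontend_run_cmd is hypothetical; always review the printed command before executing it.

```bash
#!/usr/bin/env bash
# Reads the controller log on stdin and prints the most recent
# "docker run ..." command that mentions frontend, with everything that
# precedes "docker run " on that line (the log prefix) stripped.
last_frontend_run_cmd() {
  grep 'docker run ' | grep frontend | tail -n 1 | sed 's/.*\(docker run .*\)/\1/'
}
```

For example: docker logs controller 2>&1 | last_frontend_run_cmd.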

The controller component cannot start other services

Sometimes, after a reboot, the controller component is unable to start other service components and returns errors such as Access Denied or Authentication Required, even though the services in question start when launched manually with the docker start command.

This is most likely due to a problem with the SSH connection and authentication, or some issues with Docker commands executed over SSH. To avoid this, make sure that:

- The SSH password of the Private Cloud administrator user (that is, alcadm by default) has not expired. If it has, update the configuration.

- SSH connections are allowed for the administrator user. Their access to SSH can be revoked after multiple unsuccessful connection attempts.

- The hosts.allow and hosts.deny configuration files in /etc are configured correctly.

- The user account that set up Private Cloud (either alcadm, the default one, or a custom user) is a member of the docker group. To check this, run the following command:

groups <user name>

If the user is not a member of the group, add them:

sudo usermod -aG docker <user name>
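The group check above can also be done in a script, for example before running the installer. A minimal sketch based on id -nG, which lists all groups of a user; the function name user_in_group is hypothetical.

```bash
#!/usr/bin/env bash
# Returns 0 if the user ($1) is a member of the group ($2, default: docker).
user_in_group() {
  local user="$1" group="${2:-docker}"
  # id -nG prints all group names of the user, separated by spaces.
  id -nG "$user" 2>/dev/null | tr ' ' '\n' | grep -qx "$group"
}
```

For example: user_in_group alcadm || sudo usermod -aG docker alcadm.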

Problem with ports

If controller is unable to start only some of the service components, make sure that the ports for the problematic services are open and available. See the list of the service components and their ports in the architecture description.

If it appears that there is no problem with the ports, try restarting the Docker daemon by executing the following command:

systemctl restart docker

Problem with encryption keys when upgrading to Ubuntu 22.04

This scenario assumes that you have a working Private Cloud 2.3.0–2.4.0 instance on a machine running Ubuntu 18.04 or Ubuntu 20.04, and that you are using the Private Cloud 2.4.1 installation script. It also assumes the default Private Cloud installation location (/home/alcadm/) and user (alcadm). If you use a custom location or user, adjust the paths and user name accordingly.

Since Ubuntu 22.04 does not accept RSA-SHA1 (ssh-rsa) signatures by default, you need to switch the Private Cloud instance to a different SSH key type. Follow the instructions below.

- Upgrade Private Cloud to 2.4.1:

sudo ./install.sh update

- Generate the encryption keys:

ssh-keygen -q -t ed25519 -a 100 -m PEM -f "/home/alcadm/alc/controller/keys/id_ed25519" -C "" -N ""

- After generating the key, make sure the alcadm user has permissions to read the generated keys. Then open the nodes.json file:

sudo nano /home/alcadm/alc/controller/nodes.json

In the text editor, replace id_rsa with id_ed25519 and save the file. The resulting file should look like this:

{
  "host" : "10.0.103.73",
  "sshAccess" : {
    "method" : "PRIVATE_KEY",
    "user" : "alcadm",
    "key" : "id_ed25519"
  }
}

- Add the public key to the authorized keys file:

cat /home/alcadm/alc/controller/keys/id_ed25519.pub >> /home/alcadm/.ssh/authorized_keys

- Restart the controller component:

sudo docker restart controller

- After restarting controller, proceed with the upgrade to Ubuntu 22.04. After a successful upgrade, Private Cloud will work with the new keys.
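If you prefer not to edit nodes.json in a text editor, the id_rsa to id_ed25519 replacement can be made with sed. A minimal sketch, assuming the key is referenced exactly as "id_rsa" (in quotes), as in the example file above; the function name switch_node_key is hypothetical, and the original file is kept as nodes.json.bak.

```bash
#!/usr/bin/env bash
# Replaces the "id_rsa" key reference with "id_ed25519" in nodes.json,
# keeping the original file as <file>.bak.
switch_node_key() {
  local nodes_file="${1:-/home/alcadm/alc/controller/nodes.json}"
  [ -f "$nodes_file" ] || return 1
  sed -i.bak 's/"id_rsa"/"id_ed25519"/g' "$nodes_file"
}
```

Run it as a user that can write to nodes.json (for example, from a root shell), then restart controller as described above.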

Problem with the Docker image pulling

If a host machine connects to the internet through a proxy server, it might incorrectly handle connections to the Docker registry and the Private Cloud local registry.

To avoid this, configure Docker so that it uses an HTTP or HTTPS proxy to connect to the registry-1.docker.io URL but connects to the Private Cloud local registry without a proxy. To exclude the local registry from proxying, use the NO_PROXY variable:

[Service]
Environment="HTTP_PROXY=http://proxy.example.com:80/"
Environment="HTTPS_PROXY=http://proxy.example.com:80/"
Environment="NO_PROXY=localhost,127.0.0.1,local.cloud.registry"

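On systemd-based hosts, these lines usually go into a drop-in file for the docker service, such as /etc/systemd/system/docker.service.d/http-proxy.conf (the location described in the Docker documentation). The sketch below writes such a file; the function name write_docker_proxy_conf is hypothetical, and the target directory is a parameter so that you can review the result before installing it.

```bash
#!/usr/bin/env bash
# Hypothetical helper: writes a systemd drop-in file with Docker proxy settings.
#   $1: target directory (on a live host, typically /etc/systemd/system/docker.service.d)
#   $2: proxy URL, for example http://proxy.example.com:80/
write_docker_proxy_conf() {
  local dir="$1" proxy="$2"
  mkdir -p "$dir" || return 1
  # NO_PROXY keeps traffic to the Private Cloud local registry off the proxy.
  cat > "$dir/http-proxy.conf" <<EOF
[Service]
Environment="HTTP_PROXY=$proxy"
Environment="HTTPS_PROXY=$proxy"
Environment="NO_PROXY=localhost,127.0.0.1,local.cloud.registry"
EOF
}
```

After writing the file to the real drop-in directory from a root shell, run systemctl daemon-reload and systemctl restart docker. You can then verify the result with systemctl show --property=Environment docker, the same command the diagnostics utility uses.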
The controller component log reports the exception after an update

This issue occurs after updates are performed on an existing Private Cloud instance. A migration issue in the controller component can force the rest component to restart very frequently. To identify this issue, check the log of the container running controller with the following command:

docker logs controller

The output should look something like this:

2021-04-16 13:11:56:453 ERROR CONTROLLER - 2021-04-16T13:11:56.453 - migration REST: com.anylogic.cloud.migration.MigrationException: liquibase.exception.LockException: Could not acquire change log lock. Currently locked by 9ac02ab910d0 (172.17.0.8) since 4/8/21 11:02 PM

Additionally, the rest container log (run docker logs rest to open it) reports another error:

Error starting ApplicationContext. To display the auto-configuration report re-run your application with 'debug' enabled.

[main] ERROR org.springframework.boot.SpringApplication - Application startup failed

The most likely cause of this problem is some kind of critical failure (such as a power outage) that occurred during the execution of the update script.

To clean up the affected files and resolve the issue, do the following:

- In the container running postgres, clear the databasechangeloglock table:

docker exec -ti -u postgres postgres psql anylogic_cloud -c 'truncate databasechangeloglock;'

- Restart controller:

docker restart controller

Enabling the Elastic Load Balancer HTTPS proxy support in the Cloud instance

Elastic Load Balancer is an automatic distributor of incoming traffic that Amazon provides for its EC2 instances and containers. Among other things, it has built-in HTTPS proxy support. When used, this proxy may cause connection issues due to conflicts with the HTTPS implementation in Private Cloud.

If you decide to use the Load Balancer for your Private Cloud instance, you can avoid these issues by doing the following:

1. Start the normal installation routine for Private Cloud.

2. When asked whether you want to enable HTTPS support, type n (that is, disable it).

3. Complete the installation routine and start Private Cloud.

4. Configure your Load Balancer as needed, following the instructions in the Amazon documentation.

5. Manually enable HTTPS support in the appropriate configuration file, public, by replacing the protocol in the value of the gatewayHost field:

public:

gatewayHost : "https://10.0.0.1:8080"

6. Restart the rest component:

sudo docker restart rest

After this procedure, your Private Cloud instance should correctly handle the traffic coming from the Elastic Load Balancer.

If HTTPS is already enabled on an existing Private Cloud instance, disable it first by running the following command:

sudo ./install.sh update --use_https n

Then repeat steps 5-6 from the instruction above.
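The gatewayHost change described above can also be applied with sed. A sketch, assuming the public file contains the gatewayHost entry in the key : "value" form shown in the example; the location of the file depends on your installation and is passed as a parameter, and the function name enable_gateway_https is hypothetical.

```bash
#!/usr/bin/env bash
# Switches the gatewayHost value in the given configuration file from
# http:// to https://, keeping a backup copy with the .bak suffix.
# A value that already starts with https:// is left unchanged.
enable_gateway_https() {
  local config_file="$1"
  [ -f "$config_file" ] || return 1
  sed -i.bak 's|\(gatewayHost *: *"\)http://|\1https://|' "$config_file"
}
```

After running it on the public file, restart the rest component as in the procedure above.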

The “Insufficient RAM” error

This issue may occur when you start the model animation (by clicking the Play button on the model screen or on the experiment toolbar). The same issue may occur when starting the model from the AnyLogic Cloud UI without animation.

The error message might look like this:

The reason is one of the following:

- The model is trying to use more RAM than the machine that serves Private Cloud has.

- There are no available CPU cores on the machine that serves Private Cloud.

On the public version of AnyLogic Cloud, this usually means that you do not have permissions to launch a model of this size. If this is the case, consider subscribing.

To identify the problem, go to the Running Tasks section of the administrator panel. If all executor components there are busy, there are no free CPU cores available, and you will have to wait until another model completes its execution.

If there are free executor components, you need to change the amount of RAM required to run the model:

- Go to the experiment dashboard.

- Click in the left sidebar next to the needed experiment.

- In the Experiment settings section of Inputs, locate Memory, MB / Memory per single run, MB.

This option defines the maximum size of the Java heap allocated for a single run, for the model and the built-in DB.

- Click to make this option visible on the experiment dashboard.

- Click on the left sidebar.

- Now, on the experiment dashboard, change how much memory is allocated.

The amount of allocated memory should be no more than half of the total RAM available on the machine serving Private Cloud, minus the RAM allocated to the models that are currently running.
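The rule of thumb above is simple arithmetic. The sketch below reads it as (total RAM / 2) minus the RAM of the running models, with all values in MB; the function name max_run_memory_mb is hypothetical, and the result is clamped to 0 when the running models already use up that budget.

```bash
#!/usr/bin/env bash
# Upper bound for "Memory per single run, MB": half of the host's total RAM
# minus the RAM already allocated to running models (all values in MB).
# Prints 0 if the running models already use up that budget.
max_run_memory_mb() {
  local total_mb="$1" running_mb="$2" limit
  limit=$(( total_mb / 2 - running_mb ))
  [ "$limit" -gt 0 ] || limit=0
  echo "$limit"
}
```

For example, on a host with 16384 MB of RAM and 2048 MB allocated to running models, max_run_memory_mb 16384 2048 gives 6144. On the host itself, you can take the total from free -m, for example: max_run_memory_mb "$(free -m | awk '/^Mem:/ {print $2}')" 2048.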

An update of the SSL certificate is required

To replace the outdated SSL certificate used by Private Cloud for HTTPS connections:

- Go to the home directory of Private Cloud. Its default location is as follows:

/home/alcadm

- From there, go to the following directory:

alc/controller/preload/frontend

- Remove the existing alc.key and alc.crt files.

- Place your new key file and certificate file (if applicable) in the directory. Rename them to alc.key and alc.crt, respectively.

- Restart the frontend component in one of the following ways:

  - Go to the Services section of the administrator panel, select frontend, then click Restart service.

  - Execute the following command in your Linux terminal:

    sudo docker stop frontend

After some time, the frontend component will restart, and your Private Cloud will switch to the new HTTPS certificate.
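Before restarting frontend, it can help to confirm that the new alc.key and alc.crt belong together, since a mismatched pair prevents HTTPS from working. A sketch, assuming the openssl command-line tool is installed and the key is not passphrase-protected; it compares the public key derived from the private key with the one embedded in the certificate. The function name key_matches_cert is hypothetical.

```bash
#!/usr/bin/env bash
# Returns 0 if the private key ($1) and the certificate ($2) contain the same
# public key, that is, if they belong together.
key_matches_cert() {
  local key_pub cert_pub
  key_pub=$(openssl pkey -in "$1" -pubout 2>/dev/null) || return 1
  cert_pub=$(openssl x509 -in "$2" -noout -pubkey 2>/dev/null) || return 1
  [ "$key_pub" = "$cert_pub" ]
}
```

For example: key_matches_cert alc.key alc.crt && echo "key and certificate match". To check the new certificate's expiry date, you can use openssl x509 -in alc.crt -noout -enddate.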

Access to POI files is denied

When running a model in Private Cloud, an error may occur due to missing permissions to access the /tmp/poifiles/ directory on the executor machine. The error message may look like this:

java.lang.RuntimeException: Couldn't collect outputs from the model.

…

Caused by: java.nio.file.AccessDeniedException: /tmp/poifiles/poi-sxssf-template14966110944603305588.xlsx

This occurs because the Apache POI library, used for Excel export, attempts to create temporary files in the /tmp/poifiles/ directory. In some environments, the model process does not have the required permissions for this directory.

To work around this, you can make POI create its temporary files in a directory that the model process can write to, for example by adding the following code to the model so that it runs before any Excel export:

// Create a tmp directory under the model's working directory and make POI use it for temporary files.
java.io.File poiTempDir = new java.io.File(System.getProperty("user.dir"), "tmp");
poiTempDir.mkdirs();
org.apache.poi.util.TempFile.setTempFileCreationStrategy(new org.apache.poi.util.DefaultTempFileCreationStrategy(poiTempDir));

If this issue persists, contact your system administrator for assistance with permissions.

LDAP

If LDAP/AD connectivity is enabled but LDAP/AD credentials do not work upon authentication, check whether the required provider is specified in the registration configuration file.

Ensure the address of the LDAP/AD server has been specified correctly, in the ldap or ad configuration file respectively.

If the issue persists, check the logs of the rest component for responses to the /login request by executing the following command:

sudo docker logs rest 2>&1 | grep -i login

The following are several possible issues and their causes:

- INFO com.anylogic.cloud.service.rest.user.auth.ActiveDirectoryLdapAuthenticationProvider - Active Directory authentication failed: User was not found in directory

This message appears when the user who tries to log in does not originate from Active Directory. Consider re-checking the credentials the user has provided. If the profile does not exist in the Active Directory database, the AD server administrator must create one.

- WARN org.eclipse.jetty.server.HttpChannel - /login java.net.ConnectException: Connection timed out (Connection time out)

This message appears when the instance cannot establish a connection with the LDAP/AD server. In that case, contact the administrator of the server.

- WARN org.eclipse.jetty.server.HttpChannel - /login java.net.ConnectException: Connection refused (Connection refused)

This message appears when the instance connects to the LDAP/AD server but the connection is blocked by a firewall or some other utility. In that case, contact the server’s administrator.

- INFO org.springframework.security.ldap.authentication.ad.ActiveDirectoryLdapAuthenticationProvider - Active Directory authentication failed: Supplied password was invalid

This message appears if the user has provided an incorrect password while Active Directory login is enabled. To identify the issue, you can enable advanced debugging information:

  - Open the applications configuration file.

  - Locate the object that defines the rest service component settings (identifiable by name: rest), and append the following to the javaArgs field value:

    -Dorg.slf4j.simpleLogger.log.org.springframework.security.ldap.authentication.ad=DEBUG

    As a result, the javaArgs field should look similar to this:

    javaArgs : ["-Dorg.slf4j.simpleLogger.log.org.springframework.security.ldap.authentication.ad=DEBUG"]

  - Save and close the configuration file.

  - To apply the changes you have made in the configuration files, stop the controller and rest components by executing the following command in the Linux terminal on the machine that serves them:

    sudo docker stop controller rest

  - After that, start the controller component manually:

    sudo docker start controller

  - Wait until controller restarts the rest service component.

  - Try to log in using the Active Directory user credentials.

  - If the issue persists, check the logs of the rest component for responses to the /login request by executing the following command:

    sudo docker logs rest 2>&1 | grep -i login

  - With the advanced debugging information enabled, the log will contain an error code identifying the issue. For the list of possible error codes, see LDAP Result Code Reference.

- Upon an unsuccessful LDAP/Active Directory user login, the following message may appear in the rest service component log:

ERROR org.hibernate.engine.jdbc.spi.SqlExceptionHelper - ERROR: invalid byte sequence for encoding "UTF8": 0x00

Where: unnamed portal parameter $2

If this is the case for you, do the following:

  - Check that the user’s login and password do not contain null characters.

  - Check that Private Cloud fields are not mapped to binary LDAP/Active Directory fields:

    - Access your PostgreSQL database and execute the following query:

      select * from users where provider='type'

      Replace type with the short name of the provider you use (either ldap or ad).

    - Execute the search with the ldapsearch utility:

      ldapsearch -H <server address> -b '<user search base>' -D <Cloud user name> -W '(<user search filter>)' <field>

      In this request, <server address> is your LDAP/AD server address, <user search base> is the search base (the location in the LDAP/AD directory where the search for the requested object begins), <Cloud user name> is the user name of the Cloud LDAP/AD user, <user search filter> is an additional matching rule, and <field> is one of the fields mapped between Cloud and the LDAP/AD server (objectguid, sn, givenName, mail, and so on).

      Consider the following example:

      ldapsearch -H ldap://example.com -b 'dc=example,dc=local' -D sample.user@example.com -W '(sAMAccountName=sample)' objectguid

      This search returns the following result:

      objectGUID:: m4n5N+8u+kapipIWeE0NEhg==

      The :: characters mean that the field is binary. Use a special symbol (b:) to map it in Private Cloud.

- If the domain names specified in the usernames of authenticating users differ from the domain names used by the Active Directory server, you can explicitly specify the root distinguished name in the rootDn field of the ad configuration file:

ad:

serverAddress: "ldap://ad.example.com"
domain: "domain.example.com"
rootDn: "dc=example,dc=com" # The explicitly specified root distinguished name is used to handle the difference in the domain name.
…

- LDAPS: the rest logs display a certificate exception:

java.security.cert.CertificateException: No subject alternative names present

This usually means that the certificate lacks a proper SAN (subject alternative name). To resolve this issue, consider one of the following options:

  - Replace the certificate.

  - Make sure the connection in the ldap configuration file specifies a proper domain name rather than an IP address.

  - Specify the following Java argument for the rest component:

    -Dcom.sun.jndi.ldap.object.disableEndpointIdentification=true

    Note that this argument disables endpoint identification (host name verification) for LDAPS connections.
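The binary-field check described earlier (the :: marker in ldapsearch output) can be applied to several mapped fields at once by filtering the LDIF that ldapsearch prints. A sketch; the function name binary_ldap_attributes is hypothetical. Note that LDIF also uses :: for text values that are not plain ASCII (for example, names with accented characters), so treat the result as a list of candidates to review rather than proof that a field is binary.

```bash
#!/usr/bin/env bash
# Reads LDIF output from ldapsearch on stdin and prints the names of attributes
# whose values are base64-encoded ("name:: value"), one per line.
binary_ldap_attributes() {
  # "name: value" is plain text; "name:: value" marks a base64-encoded value.
  sed -n 's/^\([A-Za-z][A-Za-z0-9;-]*\)::.*/\1/p' | sort -u
}
```

For example: ldapsearch -H ldap://example.com -b 'dc=example,dc=local' -D sample.user@example.com -W '(sAMAccountName=sample)' objectguid sn givenName mail | binary_ldap_attributes.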

If this information does not help, contact our support team at support@anylogic.com and attach the rest component log, which you can obtain by executing the following command:

sudo docker logs rest --tail 200

Problems with desktop AnyLogic

This problem may occur when you log in to Private Cloud from desktop AnyLogic using the toolbar button or the wizard. Upon a login attempt, the following error message appears:

Network error: PKIX path building failed:

sun.security.provider.certpath.SunCertPathBuilderException: unable to find

This means that the SSL certificate validation failed: AnyLogic suspects a man-in-the-middle (MITM) attack and aborts the login process.

There are two common reasons for this:

- AnyLogic is redirected to a proxy,

- The root certificate is missing in Java Runtime Environment (JRE) of AnyLogic.

In both cases, a system administrator should check the AnyLogic and network configuration.

For system administrators:

- You can find the proxy settings in the main menu of AnyLogic, under Tools > Preferences > Connection.

-

The certificates are stored in the cacerts file located in the AnyLogic installation directory. For example, in Windows, the path may look like this:

C:\Program Files\AnyLogic 8.9 Professional\jre\lib\security\cacerts

Check if your certificate is there. If this is not the case, add the certificate using a suitable tool, for example, Portecle or the keytool utility included with Java.

A PowerShell command might look like this:.\keytool.exe -cacerts -alias MyCert -import -file C:\path\to\certificate.crt

The default keystore password is changeit (unless modified).

To modify the cacerts file, run PowerShell or the command prompt as administrator.

You can also export the certificate using your browser.

- Another option is to edit the AnyLogic.ini file, located in the AnyLogic installation folder, and specify the trust store path explicitly. Add these two lines to the end of the file:

-Djavax.net.ssl.trustStore=PATH/TO/YOUR/KEYSTORE
-Djavax.net.ssl.trustStorePassword=PASSWORD
